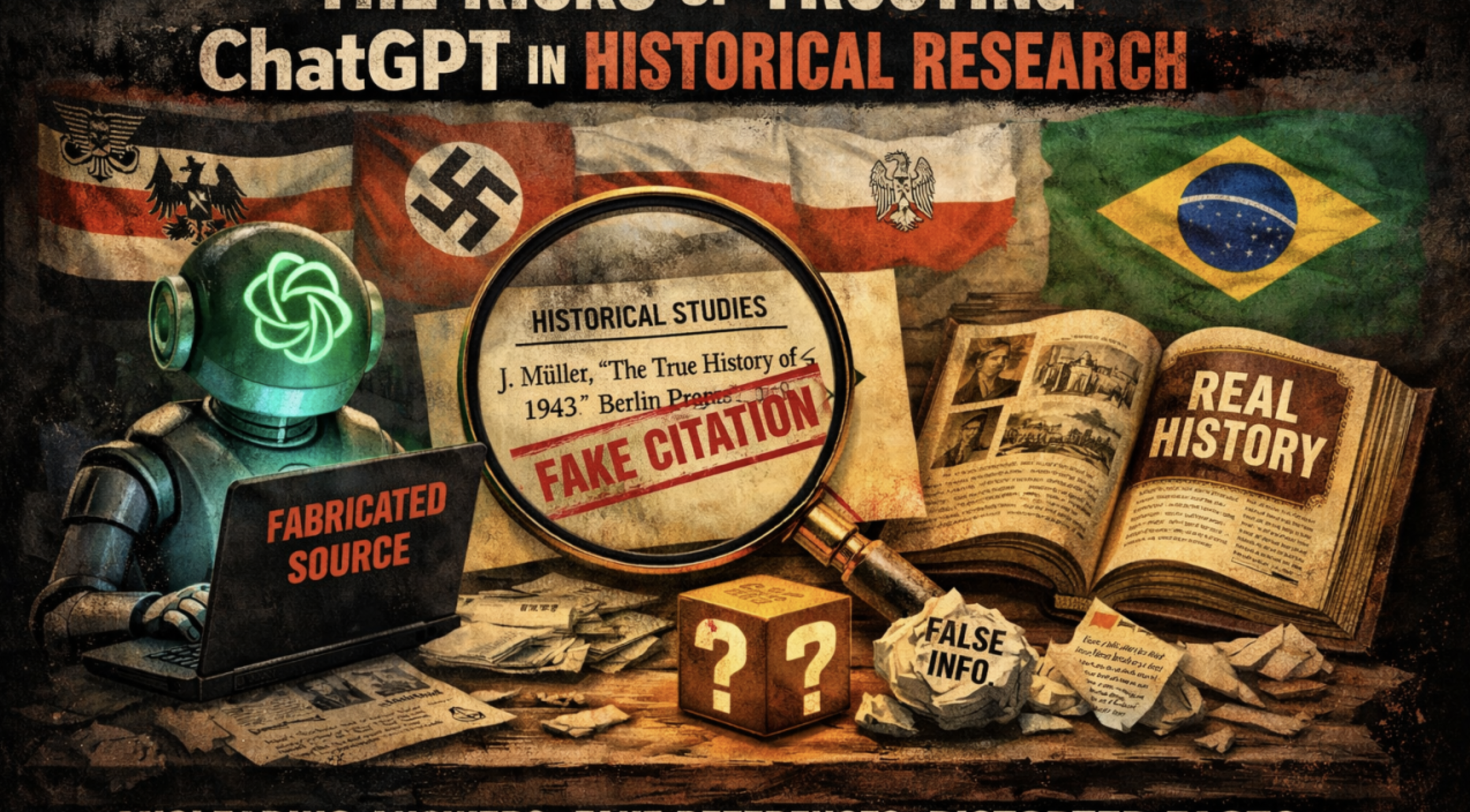

The Risks of Relying on ChatGPT in Historical Research

The use of artificial intelligence tools such as ChatGPT for historical research is becoming increasingly common. The promise is appealing: fast answers, clear language, and immediate access to large volumes of information. However, this convenience hides risks that cannot be ignored.

The first problem is the illusion of authority. Text generated by ChatGPT is well structured, coherent, and convincing – but the substance does not always match this form. In many cases, facts are softened. When ChatGPT is used to research German history, for example, it becomes evident that initial responses tend to present more moderate and less incisive versions of events.

Only when confronted with documented facts from serious scholarly sources and deeper academic research does the system adjust its responses, becoming more precise. This suggests that, at first, it may provide a superficial layer that is distant from the full harshness of historical reality. To achieve greater depth, it is necessary to question, confront, and demand rigor.

This dynamic raises an important concern: the system’s intelligence appears, at times, to be oriented more toward avoiding confrontation with the harshness of historical facts than toward presenting them clearly – even when dealing with widely documented events.

Another central risk is the tendency toward simplification. History – especially German history – is marked by events of extreme gravity and complexity that raise serious questions of responsibility. Yet even when the scholarly basis is strong and the sources are publicly accessible, responses may present these facts in an overly softened manner, diluting tensions and reducing their severity. In some cases, this can create the impression that certain aspects remain debatable when historiography has already firmly established them.

This issue becomes even more sensitive when addressing topics such as the history of Germans in southern Brazil, the occupations of Poland, the periods of the Second and Third German Empires, the genocide committed by Germany in its African colonies, Nazism and the Holocaust, and the occupations of Greece, Ukraine, and Soviet territories during World War II. These events require absolute historical rigor. Any approach that softens, relativizes, or treats them with excessive neutrality compromises the understanding of their true magnitude.

A further problem is the lack of verifiable references – and, in some cases, the presentation of references that simply do not exist. This is not a minor flaw but a serious failure of reliability. Unlike academic work, which allows the reader to verify claims, ChatGPT can simulate authority without providing real, traceable sources.

Furthermore, ChatGPT does not appear to “understand” history – it reorganizes information based on learned language patterns. It does not analyze critically as a historian does; it produces plausible responses. This can result in narratives that appear complete but are simplified, with relevant omissions or framings that do not fully reflect the complexity of the facts.

Finally, there is a more subtle risk: the replacement of critical thinking. When users begin to trust responses automatically, they stop questioning, comparing, and investigating independently. Historical research, which should be an active and rigorous process, becomes passive consumption of information.

Artificial intelligence – and ChatGPT in particular – can be a useful tool, especially for grammatical correction and stylistic improvement. However, it should not be treated as a reliable source for historical research. In the field of history, trusting without questioning is not just a methodological error; it is a risk to the understanding of reality itself.